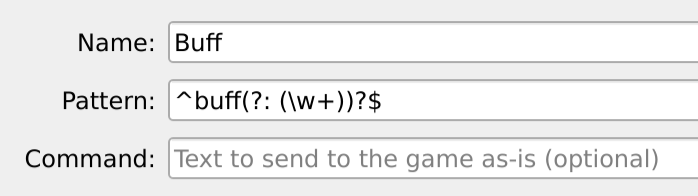

Brief summary of issue / Description of requested feature:

Currently, when you use a screen reader, it is able to read the menus and dialogs of Mudlet, but not the actual window where the game text is displayed. This is because menus and dialogs in Mudlet are standard Qt widgets, which already support accessibility, whereas the game text widget is a handcrafted and very fast widget for rendering text, which does not have a11y support yet. This issue is about adding support to it: the TConsole / TTextEdit classes.

Steps to reproduce the issue / Reasons for adding feature:

Error output / Expected result of feature:

The expected result is that NVDA on Windows, the built-in macOS reader, and the KDE and GNOME accessibility tools are able to read text as it comes from the game, and that the widget is navigable (as in, you can go back to read earlier text) in a standard way, as a visually impaired player would expect.

Extra information, such as Mudlet version, operating system and ideas for how to solve / implement:

Thus this shall be implemented using Qt's accessibility framework, as that will automatically handle the OS-specific details for us.

This issue will be considered closed when at least 2 visually impaired users sign off on usability. We're new to developer bounties and this is our first foray into it - so we expect a few bumps along the road :)

I have it speaking text as it appears on the screen. If you press any key with the input box focused, it immediately stops the text playback.

The accessibility API is not going to give the right user experience for the MUD sessions. First and foremost, this should be playable. My thinking is that I should be able to sit with my hands on the keyboard, wear a blindfold, and play the MUD by listening instead of looking. A generic play/pause feature would just be an awkward encumbrance. Also, the accessibility API will not allow you to configure different voices for different hosts, which is really the correct thing to do considering Mudlet allows you to connect to multiple hosts with different nationalities and languages simultaneously. TTS voices are specialized to specific locales.

Text is automatically spoken as it arrives from the server. Only the focused MUD session tab is spoken. Each host can have a different voice configured. Perhaps there should be a way to restart playback for all text that has arrived since the last send. Ctrl-R or something.

I've never done a software bounty and want some kind of assurance that I'm following protocol and that I'll be able to collect. I've already started, but do I have the go-ahead to implement this?

How familiar are you with screen reading technology? Because wearing a blindfold is not going to provide you the same experience as a blind individual who uses a screen reader every day, all the time, to use their computer, their phone, their watch, their television, etc.
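
The playback rules raised in the discussion (speak text as it arrives, speak only the focused session, stop on any key press in the input box, one voice per host, replay everything since the last send) can be sketched as a small piece of bookkeeping. This is only an illustration, not Mudlet's code: `Speaker`, `RecordingSpeaker`, `SessionSpeech` and all method names are hypothetical, and the sketch is deliberately Qt-free. In a real Qt build, the `Speaker` role could plausibly be filled by a thin wrapper around `QTextToSpeech`, which offers `say()` and `stop()`.

```cpp
#include <map>
#include <string>
#include <vector>

// Hypothetical stand-in for a text-to-speech backend (an assumption, not a Mudlet API).
struct Speaker {
    virtual ~Speaker() = default;
    virtual void say(const std::string& voice, const std::string& text) = 0;
    virtual void stop() = 0;
};

// Records calls instead of producing audio, so the rules can be checked
// without a speech engine installed.
struct RecordingSpeaker : Speaker {
    std::vector<std::string> log;
    void say(const std::string& voice, const std::string& text) override {
        log.push_back("say[" + voice + "]: " + text);
    }
    void stop() override { log.push_back("stop"); }
};

// Per-host state: the configured voice and the text received since the last send.
struct Session {
    std::string voice = "default";
    std::vector<std::string> sinceLastSend;
};

class SessionSpeech {
public:
    explicit SessionSpeech(Speaker& speaker) : speaker_(speaker) {}

    // Each host can have a different voice configured.
    void setVoice(const std::string& host, const std::string& voice) {
        sessions_[host].voice = voice;
    }

    // Only the focused session tab is spoken.
    void setFocused(const std::string& host) { focused_ = host; }

    // Text is remembered for every host, but spoken only for the focused one.
    void onServerText(const std::string& host, const std::string& text) {
        Session& s = sessions_[host];
        s.sinceLastSend.push_back(text);
        if (host == focused_) {
            speaker_.say(s.voice, text);
        }
    }

    // Any key press in the input box stops playback immediately.
    void onKeyPress() { speaker_.stop(); }

    // Sending a command starts a new replay window for that host.
    void onSend(const std::string& host) { sessions_[host].sinceLastSend.clear(); }

    // Replay everything that arrived since the last send (the "Ctrl-R" idea).
    void replay() {
        auto it = sessions_.find(focused_);
        if (it == sessions_.end()) {
            return;
        }
        speaker_.stop();
        for (const std::string& line : it->second.sinceLastSend) {
            speaker_.say(it->second.voice, line);
        }
    }

private:
    Speaker& speaker_;
    std::string focused_;
    std::map<std::string, Session> sessions_;
};
```

Keeping the speech backend behind an interface means the focus, interrupt and replay rules can be tried out with `RecordingSpeaker` before any real voice is wired in. Whether this replaces or complements the screen reader route through Qt's accessibility framework is exactly the open question in the thread.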
